
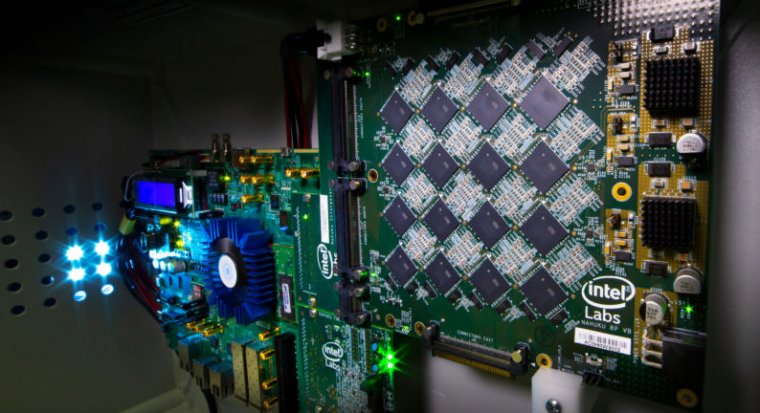

An Intel Nahuku board, which can contain 8 to 32 Loihi neuromorphic processing units, interfaced to an Intel Arria 10 FPGA development kit. Intel’s latest neuromorphic system, Pohoiki Beach, is made up of multiple Nahuku boards and contains 64 Loihi chips. (credit: Intel Labs)

Neuromorphic engineering, the building of machines that mimic the function of organic brains in hardware as well as software, is becoming increasingly prominent. The field has progressed rapidly, from conceptual beginnings in the late 1980s to experimental field-programmable neural arrays in 2006, early memristor-powered device proposals in 2012, IBM’s TrueNorth NPU in 2014, and Intel’s Loihi neuromorphic processor in 2017. Yesterday, Intel broke a little more new ground with the debut of a larger-scale neuromorphic system, Pohoiki Beach, which integrates 64 of its Loihi chips.

Intel’s Jon Tse demonstrates teaching a single Loihi chip to identify new objects in just a few seconds each.

Where traditional computing works by running numbers through an optimized pipeline, neuromorphic hardware performs calculations using artificial “neurons” that communicate with each other. Like the natural neurons it mimics, this workflow is highly specialized for specific applications, so you likely won’t replace conventional computers with Pohoiki Beach systems or their descendants, for the same reasons you wouldn’t replace a desktop calculator with a human mathematics major.
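To make the idea of “neurons” that communicate with each other a little more concrete, here is a minimal sketch of two leaky integrate-and-fire neurons in Python. This is a generic textbook model and not Intel’s actual Loihi neuron implementation; the class name `LIFNeuron`, the function `run_chain`, and the threshold, leak, and weight values are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class LIFNeuron:
    """Generic leaky integrate-and-fire neuron (illustrative, not Loihi's model)."""
    threshold: float = 1.0  # potential at which the neuron fires (assumed value)
    leak: float = 0.9       # fraction of potential retained each step (assumed value)
    potential: float = 0.0  # current membrane potential

    def step(self, input_current: float) -> bool:
        """Advance one time step; return True if the neuron spikes."""
        # Decay the stored potential, then add the new input.
        self.potential = self.potential * self.leak + input_current
        if self.potential >= self.threshold:
            self.potential = 0.0  # reset after firing
            return True
        return False


def run_chain(inputs, weight=0.6):
    """Drive one neuron with `inputs`; its spikes feed a second neuron."""
    pre, post = LIFNeuron(), LIFNeuron()
    pre_spikes, post_spikes = [], []
    for current in inputs:
        fired = pre.step(current)
        pre_spikes.append(fired)
        # The downstream neuron only receives input when the upstream one spikes.
        post_spikes.append(post.step(weight if fired else 0.0))
    return pre_spikes, post_spikes


if __name__ == "__main__":
    pre, post = run_chain([0.5] * 6)
    print("upstream spikes:  ", pre)
    print("downstream spikes:", post)
```

The point of the sketch is that information travels as discrete spike events rather than as a continuous stream of numbers: the second neuron does nothing on time steps where the first stays silent.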

However, for the tasks at which organic brains excel, neuromorphic hardware is proving much more efficient than conventional processors or GPUs. Visual object recognition is perhaps the most widely realized task where neural networks excel, but other examples include playing foosball, adding kinesthetic intelligence to prosthetic limbs, and even understanding skin touch in ways similar to how a human or animal might understand it.

